Figure 1: Our approach (PDDM) can efficiently and effectively learn complex

dexterous manipulation skills in both simulation and the real world. Here, the

learned model is able to control the 24-DoF Shadow Hand to rotate two

free-floating Baoding balls in the palm, using just 4 hours of real-world data

with no prior knowledge/assumptions of system or environment dynamics.

Dexterous manipulation with multi-fingered hands is a grand challenge in

robotics: the versatility of the human hand is as yet unrivaled by the

capabilities of robotic systems, and bridging this gap will enable more general

and capable robots. Although some real-world tasks (like picking up a

television remote or a screwdriver) can be accomplished with simple parallel

jaw grippers, there are countless tasks (like functionally using the remote to

change the channel or using the screwdriver to screw in a nail) in which

dexterity enabled by redundant degrees of freedom is critical. In fact,

dexterous manipulation is defined as being object-centric, with the goal

of controlling object movement through precise control of forces and motions

— something that is not possible without the ability to simultaneously impact

the object from multiple directions. For example, using only two fingers to

attempt common tasks such as opening the lid of a jar or hitting a nail with a

hammer would quickly encounter the challenges of slippage, complex contact

forces, and underactuation. Although dexterous multi-fingered hands can indeed

enable flexibility and success of a wide range of manipulation skills, many of

these more complex behaviors are also notoriously difficult to control: They

require finely balancing contact forces, breaking and reestablishing contacts

repeatedly, and maintaining control of unactuated objects. Success in such

settings requires a sufficiently dexterous hand, as well as an intelligent

policy that can endow such a hand with the appropriate control strategy. We

study precisely this in our work on Deep Dynamics Models for Learning Dexterous

Manipulation.

Continue

Kourosh Hakhamaneshi

Sep 26, 2019

In this post, we share some recent promising results regarding the applications

of Deep Learning in analog IC design. While this work targets a specific

application, the proposed methods can be used in other black box optimization

problems where the environment lacks a cheap/fast evaluation procedure.

So let’s break down how the analog IC design process is usually done, and then

how we incorporated deep learning to ease the flow.

Continue

Adam Stooke

Sep 24, 2019

UPDATE (15 Feb 2020): Documentation is now available for rlpyt! See it at

rlpyt.readthedocs.io. It describes program flow, code organization, and

implementation details, including class, method, and function references for

all components. The code examples still introduce ways to run experiments, and

now the documentation is a more in-depth resource for researchers and

developers building new ideas with rlpyt.

Since the advent of deep reinforcement learning for game play in 2013, and

simulated robotic control shortly after, a multitude of new algorithms

have flourished. Most of these are model-free algorithms which can be

categorized into three families: deep Q-learning, policy gradients, and Q-value

policy gradients. Because they rely on different learning paradigms, and

because they address different (but overlapping) control problems,

distinguished by discrete versus continuous action sets, these three families

have developed along separate lines of research. Currently, very few if any

code bases incorporate all three kinds of algorithms, and many of the original

implementations remain unreleased. As a result, practitioners often must

develop from different starting points and potentially learn a new code base

for each algorithm of interest or baseline comparison. RL researchers must

invest time reimplementing algorithms–a valuable individual exercise but one

which incurs redundant effort across the community, or worse, one that presents

a barrier to entry.

Yet these algorithms share a great depth of common reinforcement learning

machinery. We are pleased to share rlpyt, which leverages this commonality

to offer all three algorithm families built on a shared, optimized

infrastructure, in one repository. Available from BAIR at

https://github.com/astooke/rlpyt, it contains modular implementations of

many common deep RL algorithms in Python using Pytorch, a leading deep learning

library. Among numerous existing implementations, rlpyt is a more

comprehensive open-source resource for researchers.

Continue

We introduce Bit-Swap, a scalable and effective lossless data compression

technique based on deep learning. It extends previous work on practical

compression with latent variable models, based on bits-back coding and

asymmetric numeral systems. In our experiments Bit-Swap is able to beat

benchmark compressors on a highly diverse collection of images. We’re releasing

code for the method and optimized models such that people can explore and

advance this line of modern compression ideas. We also release a demo and

a pre-trained model for Bit-Swap image compression and decompression on your

own image. See the end of the post for a talk that covers how bits-back coding

and Bit-Swap works.

Continue

Nicholas Carlini

Aug 13, 2019

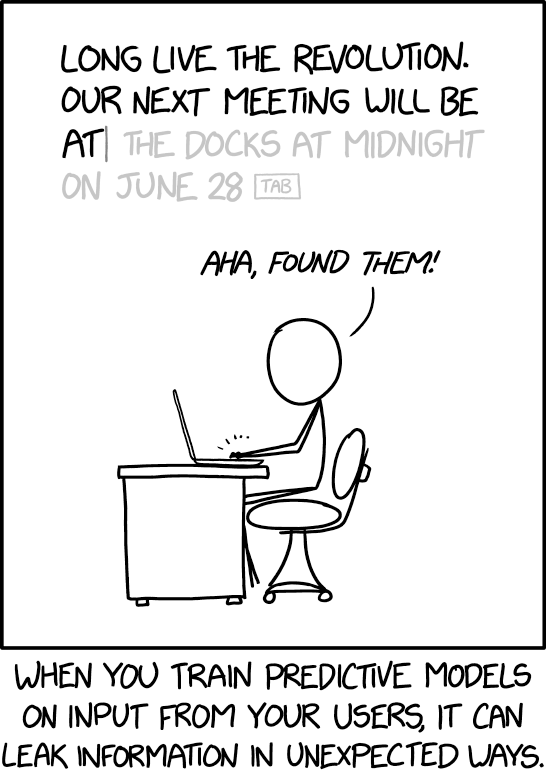

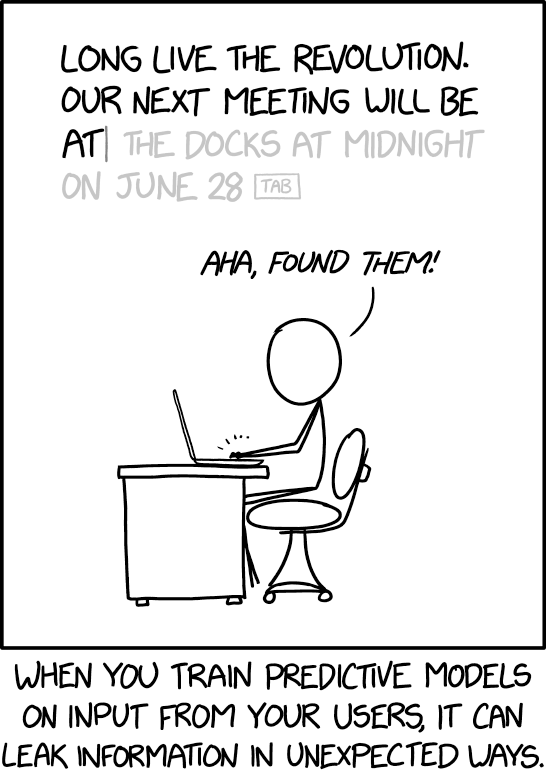

It is important whenever designing new technologies to ask “how will this

affect people’s privacy?” This topic is especially important with regard to

machine learning, where machine learning models are often trained on sensitive

user data and then released to the public. For example, in the last few years

we have seen models trained on users’ private emails, text

messages,

and medical records.

This article covers two aspects of our upcoming USENIX Security

paper that investigates to what extent

neural networks memorize rare and unique aspects of their training data.

Specifically, we quantitatively study to what extent following

problem actually occurs in practice:

Continue

To operate successfully in a complex and changing environment, learning agents must be able to acquire new skills quickly.

Humans display remarkable

skill in this area — we can learn to recognize a new object from one example,

adapt to driving a different car in a matter of minutes, and add a new slang

word to our vocabulary after hearing it once. Meta-learning is a promising

approach for enabling such capabilities in machines. In this paradigm, the

agent adapts to a new task from limited data by leveraging a wealth of

experience collected in performing related tasks. For agents that must take

actions and collect their own experience, meta-reinforcement learning (meta-RL)

holds the promise of enabling fast adaptation to new scenarios. Unfortunately,

while the trained policy can adapt quickly to new tasks, the meta-training

process requires large amounts of data from a range of training tasks,

exacerbating the sample inefficiency that plagues RL algorithms. As a result,

existing meta-RL algorithms are largely feasible only in simulated

environments. In this post, we’ll briefly survey the current landscape of

meta-RL and then introduce a new algorithm called PEARL that drastically

improves sample efficiency by orders of magnitude. (Check out the research paper and the code.)

Continue

Daniel Ho, Eric Liang, Richard Liaw

Jun 7, 2019

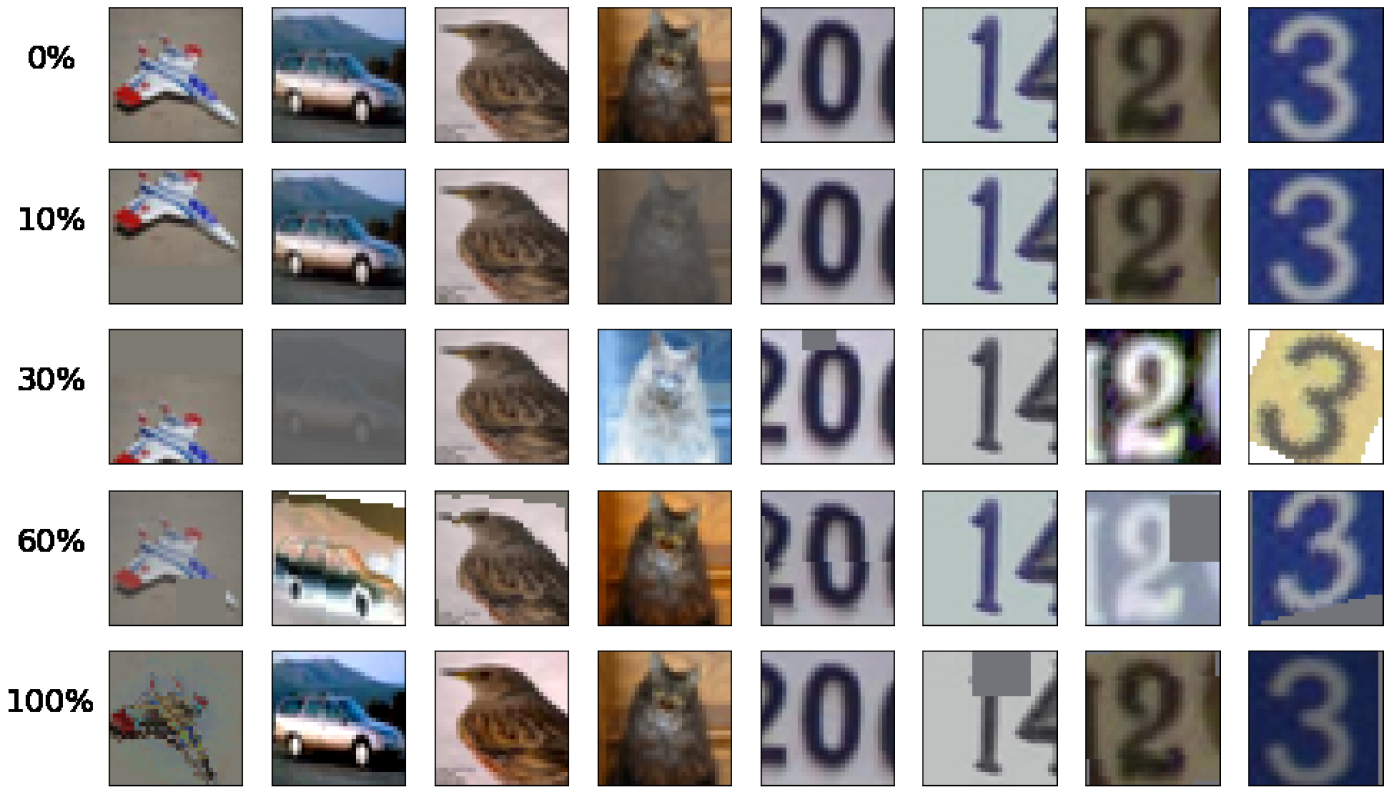

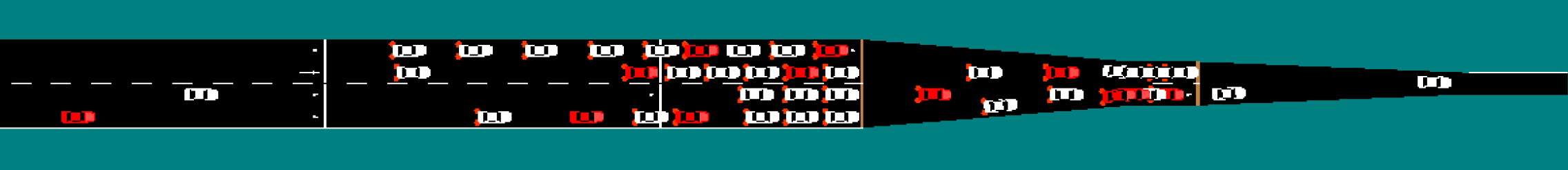

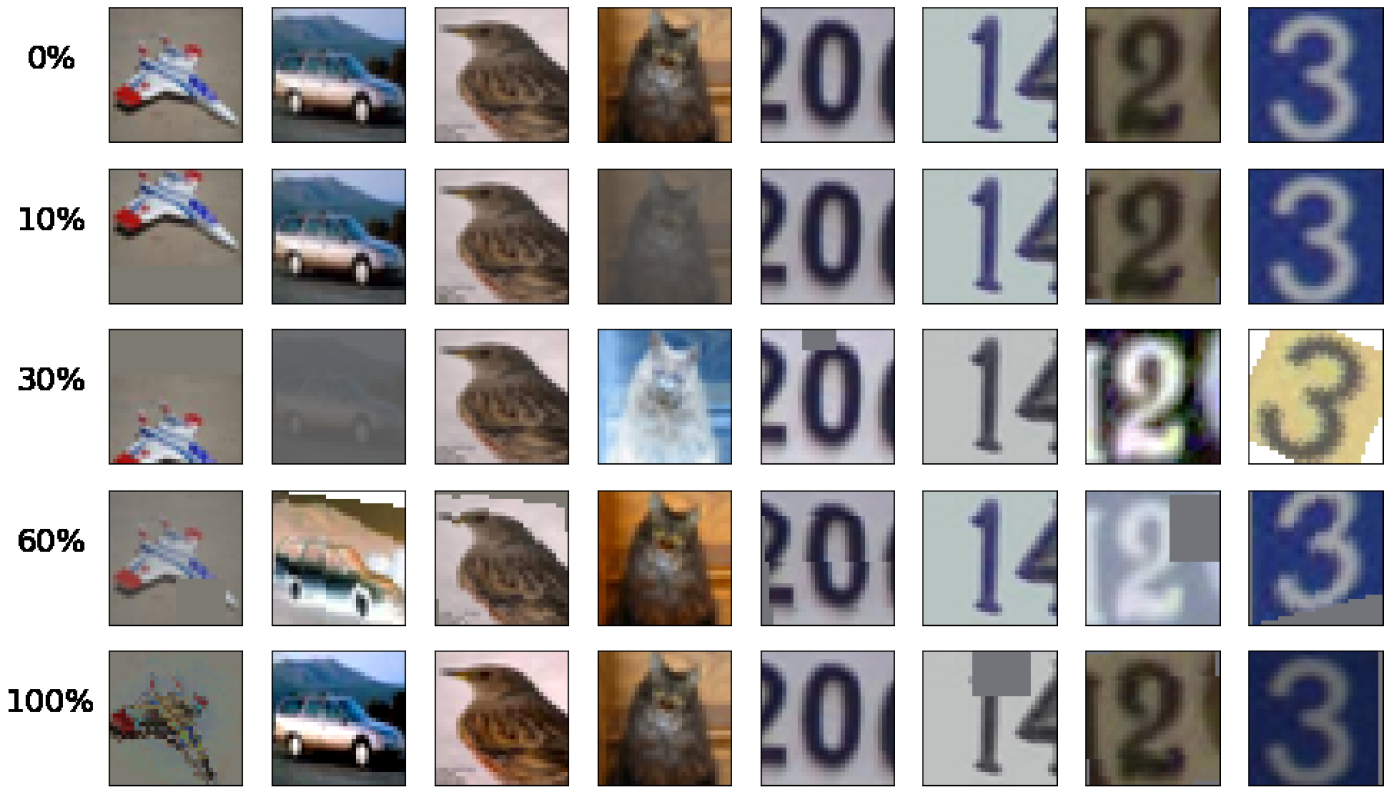

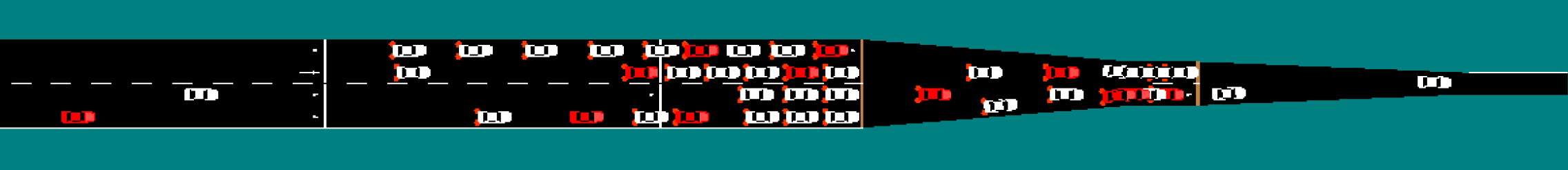

Effect of Population Based Augmentation applied to images, which differs at different percentages into training.

In this blog post we introduce Population Based Augmentation (PBA), an

algorithm that quickly and efficiently learns a state-of-the-art approach to

augmenting data for neural network training. PBA matches the previous best

result on CIFAR and SVHN but uses one thousand times less

compute, enabling researchers and practitioners to effectively learn

new augmentation policies using a single workstation GPU. You can use PBA

broadly to improve deep learning performance on image recognition tasks.

We discuss the PBA results from our recent paper and then show how

to easily run PBA for yourself on

a new data set in the Tune framework.

Continue

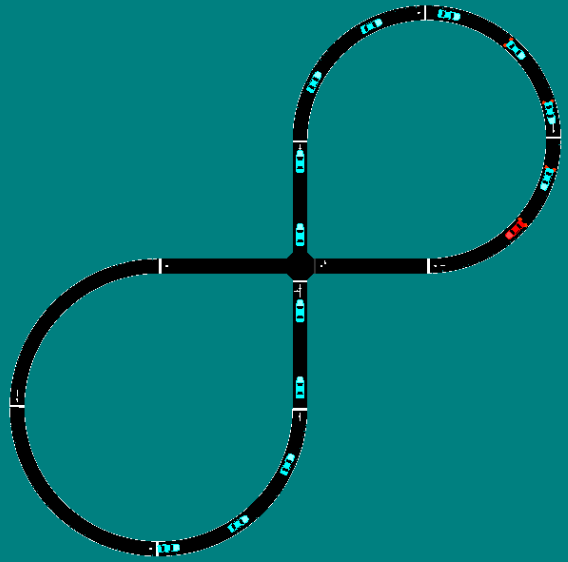

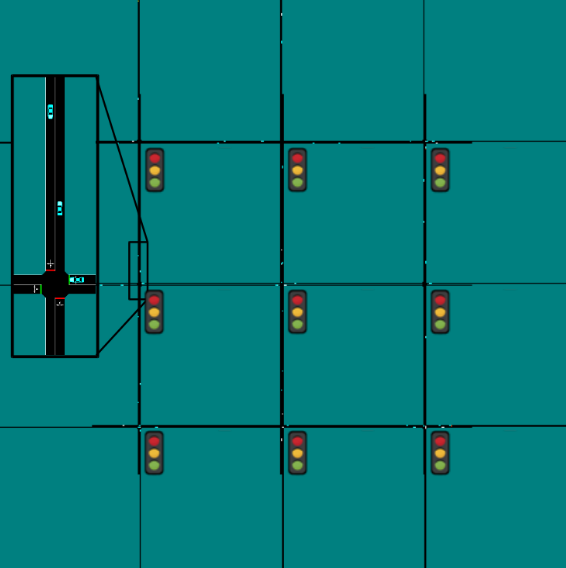

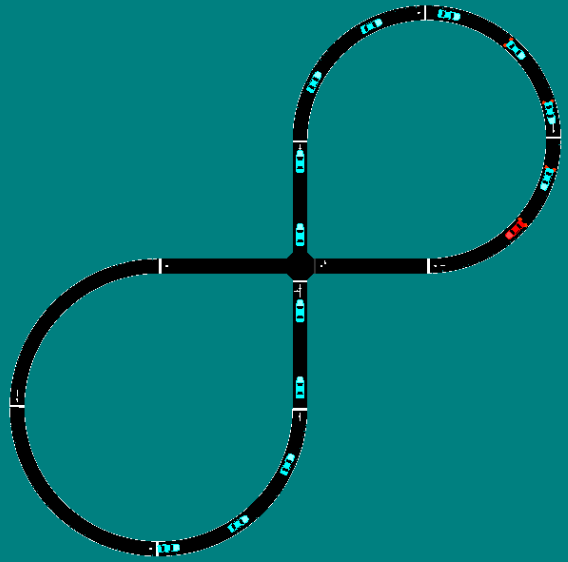

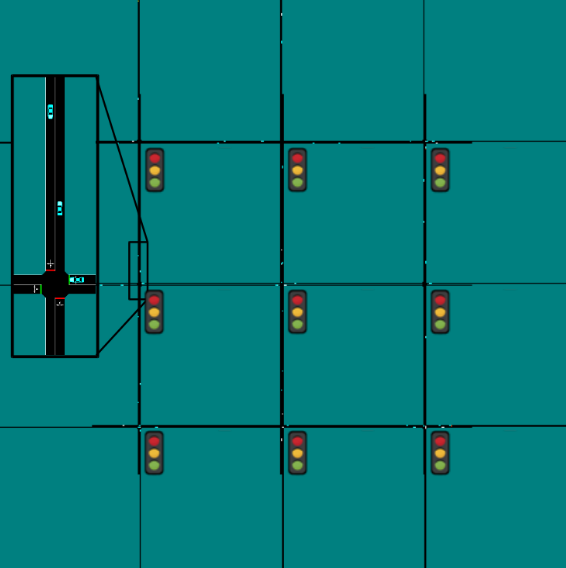

Eugene Vinitsky

Jun 3, 2019

We are in the midst of an unprecedented convergence of two rapidly growing

trends on our roadways: sharply increasing congestion and the deployment of

autonomous vehicles. Year after year, highways get slower and slower: famously,

China’s roadways were paralyzed by a two-week long traffic jam in 2010. At the

same time as congestion worsens, hundreds of thousands of semi-autonomous

vehicles (AVs), which are vehicles with automated distance and lane-keeping

capabilities, are being deployed on highways worldwide. The second trend offers

a perfect opportunity to alleviate the first. The current generation of AVs,

while very far from full autonomy, already hold a multitude of advantages over

human drivers that make them perfectly poised to tackle this congestion. Humans

are imperfect drivers: accelerating when we shouldn’t, braking aggressively,

and make short-sighted decisions, all of which creates and amplifies patterns

of congestion.

Continue

Communicating the goal of a task to another person is easy: we can use language, show them an image of the desired outcome, point them to a how-to video, or use some combination of all of these. On the other hand, specifying a task to a robot for reinforcement learning requires substantial effort. Most prior work that has applied deep reinforcement learning to real robots makes uses of specialized sensors to obtain rewards or studies tasks where the robot’s internal sensors can be used to measure reward. For example, using thermal cameras for tracking fluids, or purpose-built computer vision systems for tracking objects. Since such instrumentation needs to be done for any new task that we may wish to learn, it poses a significant bottleneck to widespread adoption of reinforcement learning for robotics, and precludes the use of these methods directly in open-world environments that lack this instrumentation.

We have developed an end-to-end method that allows robots to learn from a modest number of images that depict successful completion of a task, without any manual reward engineering. The robot initiates learning from this information alone (around 80 images), and occasionally queries a user for additional labels. In these queries, the robot shows the user an image and asks for a label to determine whether that image represents successful completion of the task or not. We require a small number of such queries (around 25-75), and using these queries, the robot is able to learn directly in the real world in 1-4 hours of interaction time, resulting in one of the most efficient real-world image-based robotic RL methods. We have open-sourced our implementation.

Our method allows us to solve a host of real world robotics problems from pixels in an end-to-end fashion without any hand-engineered reward functions.

Continue

Imagine a robot trying to learn how to stack blocks and push objects using

visual inputs from a camera feed. In order to minimize cost and safety

concerns, we want our robot to learn these skills with minimal interaction

time, but efficient learning from complex sensory inputs such as images is

difficult. This work introduces SOLAR, a

new model-based reinforcement learning (RL) method that can learn skills –

including manipulation tasks on a real Sawyer robot arm – directly from

visual inputs with under an hour of interaction. To our knowledge, SOLAR is the

most efficient RL method for solving real world image-based robotics tasks.

Our robot learns to stack a Lego block and push a mug onto a coaster with only

inputs from a camera pointed at the robot. Each task takes an hour or less of

interaction to learn.

Continue